Most digital marketing agencies have it wrong.

They focus on rankings, not revenue. All we care about is how much revenue and profit we can drive to your business by growing your traffic, boosting your conversion rate and scaling your sales pipeline.

How we help grow and automate your business

Digital Marketing Strategy

Before starting any online marketing campaign, it’s best to know what you’re trying to accomplish and to have a crystal-clear plan for getting there. Our work starts with a free Online Marketing Growth Session, where we drill down into your situation and devise a marketing, sales and delivery blueprint for your business.

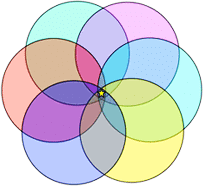

The Conversion Kaleidoscope is our proprietary framework for attracting, converting and monetising ideal clients.

“Marketing Results offers a very professional service, clear goals and milestones set and delivered.”

Drive More Traffic

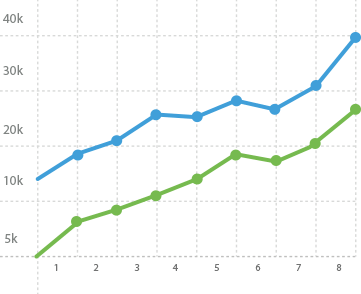

Once your online marketing funnel is in place, the next step is to drive more targeted traffic with Facebook Advertising, Google Ads and content-driven SEO. Our aim is to drive more, higher-quality traffic each and every month we work together so you can grow with confidence.

Capture More Leads With Landing Pages

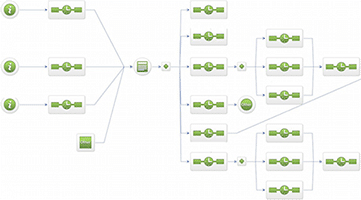

Once you have a steady flow of website traffic, we deploy high-end landing pages backed by marketing automation to convert visitors into prospects. Your ecosystem of landing pages, offers and automation grows your bottom line by working smarter, not harder.

Our Sales Lead Machine Blueprint converts shy prospects into lifelong clients.

Nurture Leads With Marketing Automation

Lead nurturing is the key to generating highly qualified sales leads who are ready, willing and able to buy from you. Lead nurturing strategies start with drip email campaigns and extend to audio and video content, direct-response webinars and even direct mail.

Convert More Leads Into Clients

Website traffic and new leads are useless unless they convert into ideal customers and clients. We design and deploy Sales Process Automation systems that boost lead quality, increase client value and provide a great client experience, all while stripping cost out of your business.

A new “conversion-focused” landing page boosted leads by over 314% for one of our clients.

“I call it my conversion machine.”

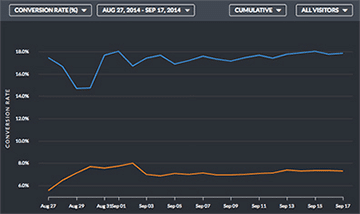

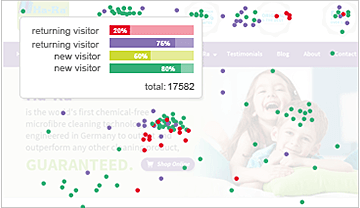

Heat mapping and split testing are just two of the tools we use to scale your results.

Test, Tweak and Optimise

Testing, tracking and optimising are where most of the value of engaging a digital marketing agency lies. We provide dashboards and real-time reports that tell you what’s working and what isn’t, allowing you to rapidly improve and scale. Without access to this information, you’re flying blind.